I’ve had this small Atomic Pi server for a while now. And I use it for all kinds of testing, learning, tools, and host a couple of apps on it. It was well worth the $40 spent on it.

I’ve also given a small explanation on it in one of my previous posts here https://kmitevski.com/samba-ntfs-drive-sharing-on-linux/

But, of course, the server would be nothing if I don’t have Kubernetes running on it :).

So for this purpose, I’ll present to you here all the steps and commands needed in case you want to create and install Kubernetes in a similar way.

All the commands and tools are run on Ubuntu 20.04.1 LTS.

Kubeadm

I’ve tried a lot of different small-scale and lightweight K8s flavors, microk8s, K3s. But this time I went to try the more ‘native’ way of creating a Kubernetes cluster and bootstrapping it using its very on kubeadm tool.

Now if you are not familiar with it, kubeadm is a tool that makes it easier to bootstrap a Kubernetes cluster.

It’s great since it takes care of deploying all the needed moving parts that go under the hood, and provides you with a simple approach to create your very own clusters.

Creating the cluster

Prerequisites

There are some prerequisites that will need to be taken care of first before kubeadm can step in and make our lives easier.

First and foremost is enabling the iptables to see bridged traffic. And you can check if the br_netbridge the module is loaded with:

$ lsmod | grep br_netfilter

If you don’t get any output it means that the module will need to be enabled, and you can do so with the following commands:

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

br_netfilter

EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sudo sysctl --systemVerify with the lsmod command again.

$ lsmod | grep br_netfilter # br_netfilter 28672 0 # bridge 172032 1 br_netfilter

The next step would be to open the required ports that Kubernetes uses. However, if you haven’t set any custom firewall or rules, there shouldn’t be anything blocking you from skipping this step.

The required ports are 6443, 2379-2380, 10250-10252 for the control plane. And if you have additional worker nodes, on them the ports 10250 and 30000-32767 should be freed.

In my case, there wasn’t any need to specifically open up any port.

Installing the container runtime

For the runtime, there are a couple of them to choose from. But probably you will be most familiar with Docker.

Although Docker, since version 1.20 on Kubernetes is deprecated as a container runtime.

And because of that, I set up the cluster to use containerd as the other next in line CRI option.

Again, prerequisites are needed for the CRI as well.

Much similar to the previous commands:

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf overlay br_netfilter EOF sudo modprobe overlay sudo modprobe br_netfilter # Setup required sysctl params, these persist across reboots. cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf net.bridge.bridge-nf-call-iptables = 1 net.ipv4.ip_forward = 1 net.bridge.bridge-nf-call-ip6tables = 1 EOF # Apply sysctl params without reboot sudo sysctl --system

Update the packages lists and install containerd:

$ sudo apt-get update -y $ sudo apt-get install containerd -y

Configure containerd with:

$ sudo mkdir -p /etc/containerd $ containerd config default | sudo tee /etc/containerd/config.toml

And restart the service:

$ sudo systemctl restart containerd

After running all of the above commands, check the status of containerd. It should return that it’s active and running.

Installing kubeadm, kubelet and kubectl

Up next is to (finally) install kubeadm, and the kubelet and kubectl.

Install the https transport, certificates and curl

$ sudo apt-get install -y apt-transport-https ca-certificates curl

Download the public signing key:

$ sudo curl -fsSLo /usr/share/keyrings/kubernetes-archive-keyring.gpg https://packages.cloud.google.com/apt/doc/apt-key.gpg

Add the Kubernetes repository to the sources list:

$ echo "deb [signed-by=/usr/share/keyrings/kubernetes-archive-keyring.gpg] https://apt.kubernetes.io/ kubernetes-xenial main" | sudo tee /etc/apt/sources.list.d/kubernetes.list

Finally, update and install the kubeadm, kubelet and kubectl.

$ sudo apt-get update $ sudo apt-get install -y kubelet kubeadm kubectl

And a good idea would be to ‘hold’ their versions so they don’t get updated accidentally.

$ sudo apt-mark hold kubelet kubeadm kubectl

By the time of writing this article, the current version installed for all the above tools is 1.20.4.

Initializing the cluster

Before finalizing this and initializing the cluster, you should choose which container network interface you will run on the cluster.

That’s because it may require an additional configs or command arguments that will be needed to pass on to kubeadm when creating the cluster.

In my case, I went with the Calico CNI and chose the Pod CIDR range 10.244.0.0/16

The CNI get’s installed after the cluster initializing step.

The other --cri-socket argument is to specify that you will use the containerd CRI.

$ sudo kubeadm init --pod-network-cidr=10.244.0.0/16 --cri-socket=/run/containerd/containerd.sock

After running the command you will see a lot of output, but be patient cause this may take up to 10-15mins.

If all went well you will be greeted by Your Kubernetes control-plane has initialized successfully!

Now copy and paste the three commands provided in the output of kubeadm.

This is to copy over the kubeconfig file in order for you to have access to the cluster.

$ mkdir -p $HOME/.kube $ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config $ sudo chown $(id -u):$(id -g) $HOME/.kube/config

In the same kubeadm output, the last kubeadm join command is meant to be executed on the worker nodes.

But in my case, that wasn’t needed, since I aimed at creating a single node cluster.

Now if you check on the node(s) with:

$ kubectl get nodes NAME STATUS ROLES AGE VERSION atomicpi-server NotReady control-plane,master 99s v1.20.4

You will see that the status on it/them is `NotReady`, that’s because the cluster requires a container network interface to be installed.

Installing Calico

Installing Calico is extremely simple and requires only two manifest files.

The first one can be straight up deployed with:

$ kubectl create -f https://docs.projectcalico.org/manifests/tigera-operator.yaml

Now the second manifest can be applied immediately if you initialized the cluster with the Calico default pod CIDR range, which is 192.168.0.0/16.

But since I went with a different one, and if you go down that route you will also need to amend the following manifest before deploying it.

# This section includes base Calico installation configuration.

# For more information, see: https://docs.projectcalico.org/v3.18/reference/installation/api#operator.tigera.io/v1.Installation

apiVersion: operator.tigera.io/v1

kind: Installation

metadata:

name: default

spec:

# Configures Calico networking.

calicoNetwork:

# Note: The ipPools section cannot be modified post-install.

ipPools:

- blockSize: 26

cidr: 192.168.0.0/16

encapsulation: VXLANCrossSubnet

natOutgoing: Enabled

nodeSelector: all()

Just replace at the CIDR block, with the range you specified when running the kubeadm init command.

In my case I’ve replaced it with 10.244.0.0/16.

ipPools:

- blockSize: 26

cidr: 10.244.0.0/16

Now with that change in place, save the file and apply it with:

$ kubectl create -f calico.yaml

You can watch the Pods from Calico get created with:

$ kubectl get pods -n calico-system -w

After a while all the pods should be in Running state:

$ kubectl get pods -n calico-system NAME READY STATUS RESTARTS AGE calico-kube-controllers-f95867bfb-bnfrq 1/1 Running 0 57s calico-node-q2227 1/1 Running 0 8m4s calico-typha-57955db586-2zz4s 1/1 Running 0 8m5s

The final step is to remove the taint on the control plane node and enable pods to be scheduled there.

$ kubectl taint nodes --all node-role.kubernetes.io/master- # node/atomicpi-server untainted

The command will remove the taint(s), but beware because this means that pods will be permitted to schedule on the master nodes. If your setup is different and you have separate worker nodes then you should leave the taint as it is.

With this, you have successfully created a single-node Kubernetes cluster with kubeadm and Calico.

Tests

Create a simple deployment with couple of replicas to check if all components are working:

$ kubectl create deployment nginx --image=nginx --replicas=3 # deployment.apps/nginx created

$ kubectl get pods NAME READY STATUS RESTARTS AGE nginx-6799fc88d8-ftl9j 1/1 Running 0 3m25s nginx-6799fc88d8-jg8q5 1/1 Running 0 3m25s nginx-6799fc88d8-wfzsj 1/1 Running 0 3m25s

Voila! The cluster is working, and you have pods running on it.

One more step

Create a service with a type of NodePort to expose the above pods locally on your cluster.

$ kubectl expose deployment nginx --name nginx-svc --port 80 --type NodePort # service/nginx-svc exposed

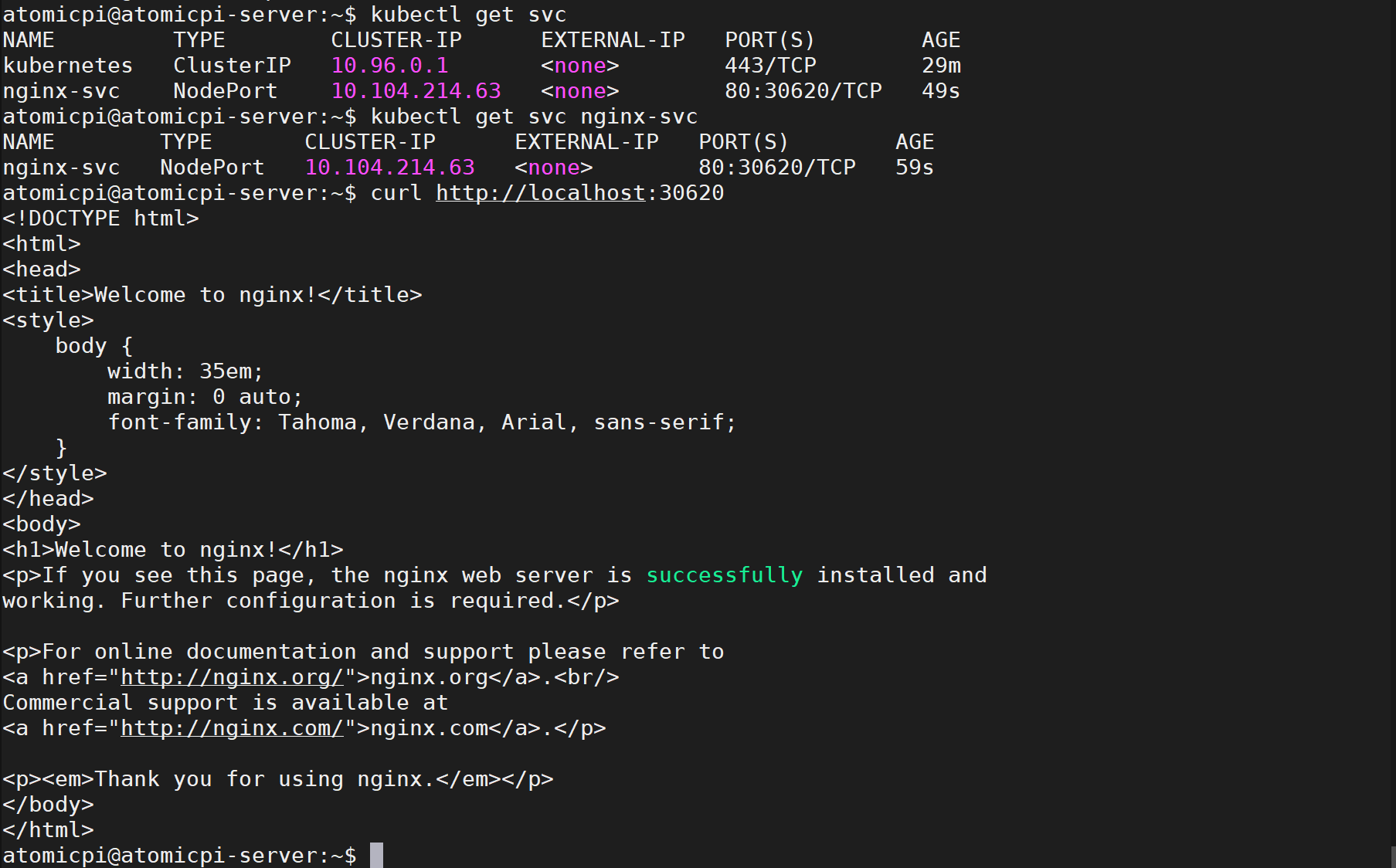

Find the exposed service node port number:

$ kubectl get svc nginx-svc NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE nginx-svc NodePort 10.104.214.63 80:30620/TCP 59s

Now if you curl locally on that port number, you should see the NGINX default page.

$ curl http://localhost:30620 Welcome to nginx! If you see this page, the nginx web server is successfully installed and working. Further configuration is required. For online documentation and support please refer to nginx.org. Commercial support is available at nginx.com. Thank you for using nginx.

Excellent!